Can We Trust What We See Online? Microsoft Thinks It Has an Answer

In an era of hyperrealistic deepfakes and AI-generated text flooding our feeds, a pressing question has emerged: how do we know what’s real anymore? Microsoft’s Chief Scientific Officer Eric Horvitz and his research team have released a new blueprint that may offer a meaningful path forward.

The plan centers on layering three complementary authentication methods — digital provenance manifests (a kind of paper trail documenting where content came from and how it changed hands), invisible watermarks readable by machines, and cryptographic fingerprints that capture unique characteristics of media like a mathematical signature. Think of it like authenticating a Rembrandt painting: you’d want documentation of its history, a hidden marker, and a scan of its brushstrokes. No single method is foolproof, but together they make deception significantly harder.
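To make the layering idea concrete, here is a minimal Python sketch of how three independent checks might be combined. It is an illustration under stated assumptions, not Microsoft's implementation or the C2PA API: the `Evidence` record, the function names, and the "high / partial / none" labels are all hypothetical, and a plain SHA-256 hash stands in for a real fingerprint, which would normally need to survive re-encoding.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class Evidence:
    """Hypothetical bundle of signals gathered for one piece of media."""
    manifest_hash: Optional[str]   # hash recorded in a provenance manifest, or None if stripped
    watermark_found: bool          # result of an assumed watermark detector
    known_fingerprints: frozenset  # fingerprints previously registered by the publisher


def fingerprint(data: bytes) -> str:
    # Stand-in only: SHA-256 matches exact bytes, unlike a robust media fingerprint.
    return hashlib.sha256(data).hexdigest()


def check_layers(data: bytes, ev: Evidence) -> dict:
    """Run each check independently so one failure does not hide the others."""
    fp = fingerprint(data)
    return {
        "manifest": ev.manifest_hash is not None and ev.manifest_hash == fp,
        "watermark": ev.watermark_found,
        "fingerprint": fp in ev.known_fingerprints,
    }


def confidence(results: dict) -> str:
    """Summarize how many layers agree (labels are illustrative)."""
    passed = sum(results.values())
    if passed == 3:
        return "high"
    if passed >= 1:
        return "partial"
    return "none"


original = b"example image bytes"
fp = fingerprint(original)

# All three layers intact.
print(confidence(check_layers(original, Evidence(fp, True, frozenset({fp})))))

# Metadata stripped: the manifest is gone, but the other two layers still match.
print(confidence(check_layers(original, Evidence(None, True, frozenset({fp})))))
```

The second call shows the point of layering: losing the manifest lowers confidence without erasing all evidence of where the content came from.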

The Microsoft team evaluated 60 different combinations of these methods, modeling how each would hold up under different failure scenarios — from metadata being stripped to content being deliberately manipulated. The research introduces the concept of “sociotechnical provenance attacks,” where bad actors might use subtle AI edits to a real image in order to trick platforms into mislabeling it as AI-generated — a new category of manipulation the layered approach is designed to counter.

The foundation of this work is C2PA (Coalition for Content Provenance and Authenticity), a coalition Microsoft co-founded in 2021 to standardize media authenticity, building on provenance work it helped pioneer in 2019. The coalition now has over 6,000 members including Adobe, Google, and OpenAI.

But will it actually be adopted? That’s where things get murky. Platforms like Meta and Google have already said they’d include labels for AI-generated content, but an audit found that only 30% of test posts across major platforms were correctly labeled. Digital forensics expert Hany Farid at UC Berkeley puts it bluntly: if major platform owners think AI-generated labels will reduce engagement, they’re incentivized not to use them.

There’s also a real risk of rushing the rollout. If labeling systems are inconsistently applied or frequently wrong, people could come to distrust them altogether — meaning it may sometimes be better to show nothing than a verdict that could be wrong.

Microsoft’s report is a serious, technically rigorous contribution to one of the thorniest problems of our time. But a blueprint is only as good as its builders. The technology exists. The question now is whether the industry has the will — and the incentive — to actually use it.

This topic was featured in Great News episode 32.

The Great News Podcast is your source for positive news, inspiring stories, and good news from around the world. We skip the doom and gloom of mainstream media to focus on scientific breakthroughs, environmental wins, and the inspiring news that proves the world is getting better. Join Andrew McGivern for a dose of optimism and uplifting stories that will change your perspective on human progress.

Keep looking for the good in the world, because it is not only there – it's everywhere.

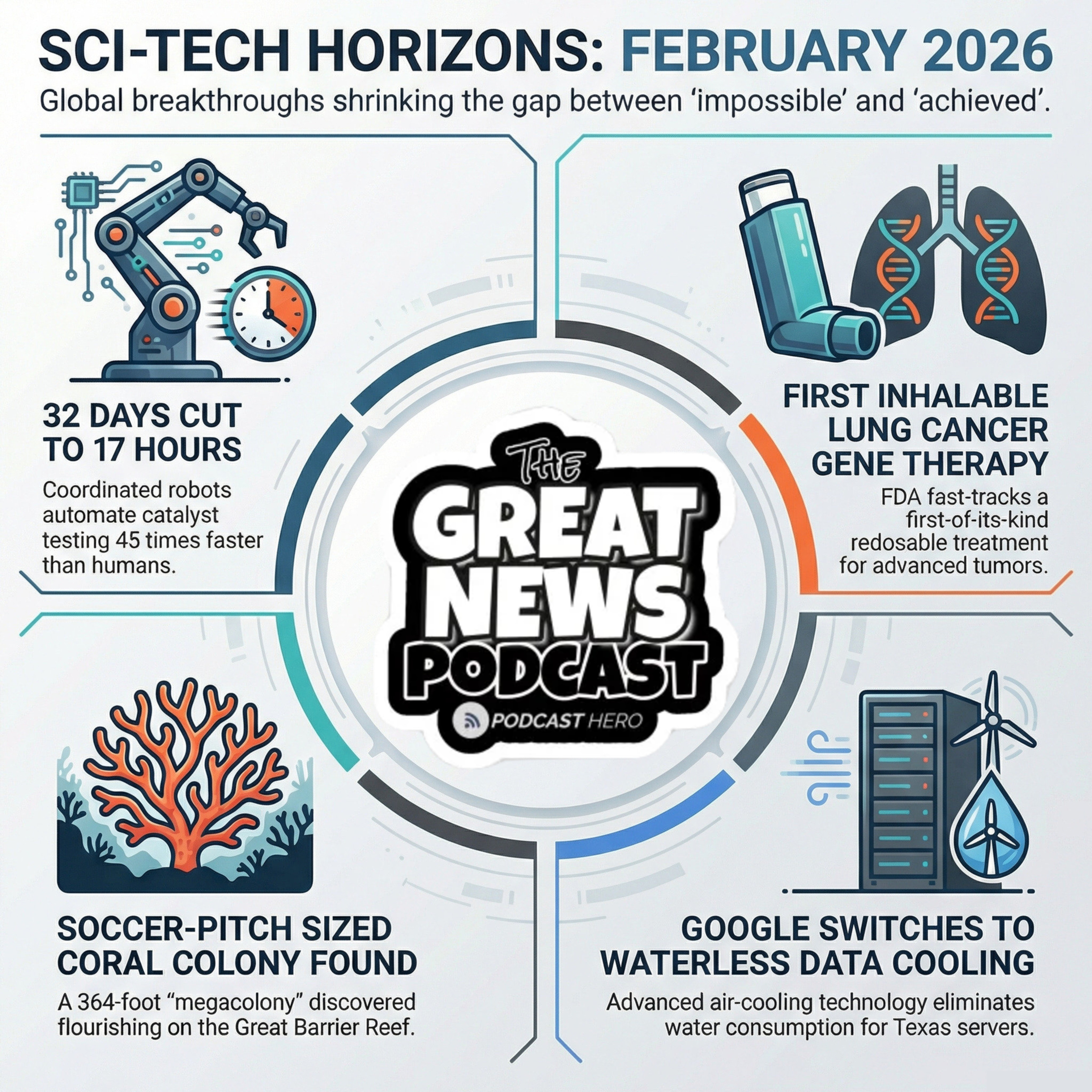

Today, we are diving into a “fountain of youth” for the brain, a massive underwater discovery by a mother-daughter duo, and a plan to save the world's most popular fruit. Plus, a new water-free data center…

Can We Reprogram Our Way Out of Alzheimer's?

A Mother, a Daughter, and the World's Largest Coral Discovery

Protecting Commercial Bananas From Fungus

New AI Data Center to Use Zero Water?

And don't forget to stick around for the speed round:

Robots Are Transforming the Chemistry Lab — One Catalyst at a Time

Breathe In, Fight Back: The World's First Inhalable Gene Therapy for Cancer Just Got Fast-Tracked

Why Your Funniest Teacher Was Probably Your Best Teacher

Source: MIT Technology Review