Electric semi-trucks could be mobile AI data centers!

Data centers are, almost by definition, boring. They sit in the desert, guzzle power, and hum quietly until something melts. But what if your data center could pull up outside, do its job, and leave when you’re done with it?

That’s the pitch from Belgian startup Windrose Electric. The company — best known for building long-haul electric semi-trucks — announced plans in early 2026 to repurpose its R700 electric truck as a mobile AI inference and energy platform. CEO Wen Han dubbed it “AI in a box”, and while the announcement was accompanied by AI-generated imagery rather than engineering blueprints, the underlying idea has turned heads for good reason.

What’s actually in the box?

The concept involves two standard ISO shipping containers loaded onto the R700’s flatbed. The first is an energy container housing a 4 MWh battery system capable of megawatt-level DC fast charging. The second is a compute container: a modular AI inference unit rated at up to 0.5 MW, a figure that describes electrical power rather than processing throughput. Together, they form a self-contained compute-and-power unit that can be driven to wherever it’s needed, and driven away just as easily when demand shifts.
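To put those two numbers side by side, here is a rough back-of-the-envelope sketch of how long the battery could feed the compute container. It assumes the 0.5 MW figure is the electrical draw of the compute unit, which the announcement does not spell out. The function name, the 90% usable-capacity figure, and the 30% cooling overhead are hypothetical values chosen for illustration, not Windrose specifications.

```python
def runtime_hours(battery_mwh: float, load_mw: float, usable_fraction: float = 1.0) -> float:
    """Hours a battery can sustain a constant load (energy divided by power)."""
    if load_mw <= 0:
        raise ValueError("load_mw must be positive")
    return battery_mwh * usable_fraction / load_mw


BATTERY_MWH = 4.0  # energy container capacity, from the announcement
COMPUTE_MW = 0.5   # compute container rating, assumed here to be electrical draw

# Ideal case: every stored megawatt-hour goes to compute.
ideal = runtime_hours(BATTERY_MWH, COMPUTE_MW)

# Hypothetical derating: 90% of capacity usable, plus 30% extra draw for cooling.
derated = runtime_hours(BATTERY_MWH, COMPUTE_MW * 1.3, usable_fraction=0.9)

print(f"Ideal runtime:   {ideal:.1f} h")
print(f"Derated runtime: {derated:.1f} h")
```

In the ideal case that works out to about eight hours at full load before the unit needs to recharge or plug in. Any energy spent driving to the site would come off the top, assuming the truck and the energy container share a battery, which the announcement also leaves open.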

Why this is actually interesting

Containerized data centers aren’t a new idea — hyperscalers and military operators have used portable compute units for years. What Windrose is proposing pushes that concept further by making the transport layer part of the product itself. Rather than shipping containers to a fixed location, the truck is the deployment vehicle.

For edge computing and AI inference workloads specifically, this is a compelling fit. Many AI tasks don’t require permanent infrastructure — they require compute that’s available now, where you need it, and gone when you don’t.

The caveats worth keeping in mind

Windrose announced this via a LinkedIn post with AI-generated images, not a product launch or working prototype. One rendering even depicted a truck configuration the company doesn’t manufacture. This is a vision statement, not a delivery schedule.

There are also real engineering challenges: data center-grade hardware generates enormous heat, mobile cooling is tricky, and running high-density compute while a vehicle manages its battery adds complexity that renders don’t capture. None of that makes it a bad idea — it makes it an early one.

The bigger picture

The AI compute boom is creating real strain on traditional data center infrastructure. Power grids are struggling to keep up, permitting timelines are long, and demand is geographically unpredictable. Windrose’s “AI in a box” may be early-stage, but it’s pointing at something real: the future of AI infrastructure probably isn’t just bigger buildings in Nevada. It might also be something that can turn up at your loading dock on a Tuesday.

This topic was featured in Great News podcast episode 34.

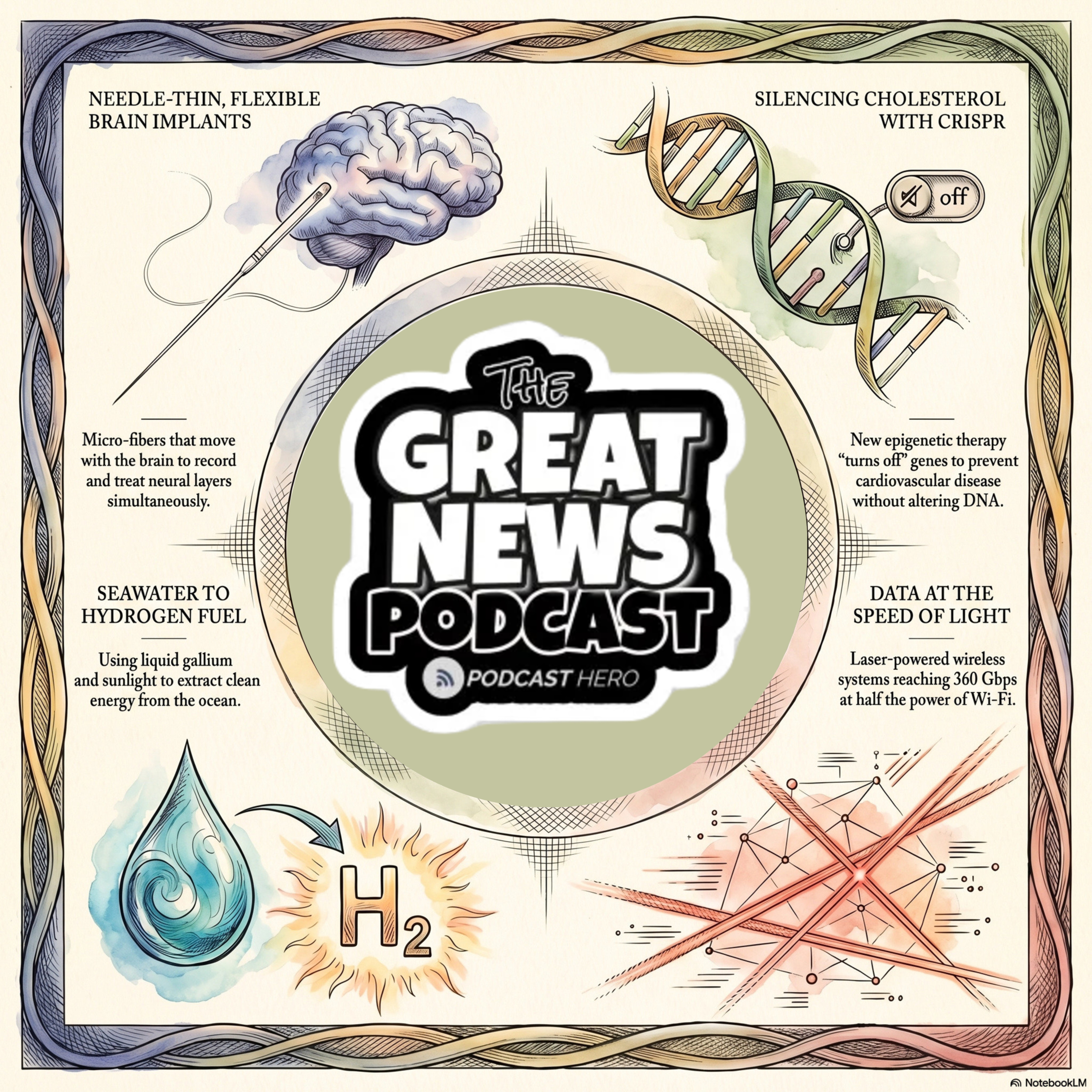

The Great News Podcast is your source for positive news, inspiring stories, and good news from around the world. We skip the doom and gloom of mainstream media to focus on scientific breakthroughs, environmental wins, and the inspiring news that proves the world is getting better. Join Andrew McGivern for a dose of optimism and uplifting stories that will change your perspective on human progress.

Keep looking for the good in the world, because it is not only there – it's everywhere.

Welcome to the Great News podcast.

Tired of all the doom and gloom news from mainstream media? You'll get none of that here! Instead, you'll find inspiring stories and developments making the world a better place.

Brought to you by the Daily Quote, the podcast designed to kickstart your day in a positive way!

Today, we are diving into a massive regulatory shift that could save millions of lives, electric semi-trucks that double as mobile supercomputers, and a way to “bottle” sunlight for use months later.

–Unlocking Personalized Medicine

–The Data Center that Drives Itself

–The Liquid That Could Change Solar Energy Forever

–The Tiny Green Machines Cleaning Our Water

And don't forget to stick around for the speed round, where we'll dive into even more great news!

–AI Just Beat Expert Doctors at Diagnosing Rare Diseases

–A Breakthrough in Parkinson’s Research

–The Philippines Recognizes Same-Sex Property Co-Ownership

–U.S. Organ Transplants Hit New Heights for the Fifth Year in a Row

Source: Interesting Engineering